In-Depth Understanding of AI Infrastructure: Liquid Cooling, Servers, and Optical Modules – U.S. Stock Sub-Sector Breakdown

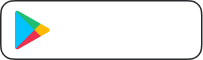

Image Source: unsplash

AI infrastructure is becoming the core driving force behind the transformation of the global technology industry. Liquid cooling is being adopted in data centers at an accelerating pace, and the liquid cooling server market has expanded steadily over the past five years. China’s liquid cooling server market is expected to reach $20.1 billion in 2024, with penetration expected to rise from 5% to 30%-40% over the next three to five years. Demand for server computing power continues to surge, driving iteration of high-performance computing architectures. In the optical module field, international competition is intensifying, and technological innovation is triggering a new round of industrial upgrading. The prioritization of cold-plate liquid cooling, the economics of liquid cooling, the diversification of AI chips, and the optical module super cycle have all become focal points for the industry.

Core Key Points

- Liquid cooling technology uses liquid as the cooling medium, offering significantly higher energy efficiency than air cooling and becoming the mainstream choice for data center heat dissipation.

- Server technology continues to evolve, adopting high-performance chips to meet the demands of AI workloads and driving overall computing power improvement.

- Optical module technology is rapidly iterating, with 800G and 1.6T modules becoming mainstream to meet the high bandwidth requirements of AI models.

- Investing in AI infrastructure requires attention to market expansion and technological innovation while remaining vigilant about supply chain risks and policy changes.

- Choosing an efficient financial service platform can improve cross-border capital scheduling efficiency, helping companies flexibly respond in global markets.

For sectors that involve cross-border procurement, equipment settlement, and overseas project spending, the efficiency of capital scheduling often affects execution speed directly. If a company or investor also needs to track payment flows, conversion costs, and related listed names, a multi-asset wallet such as BiyaPay can be used for the practical side of that process, including its exchange rate and converter tool for comparing fiat conversion costs and its stock lookup for following relevant U.S.-listed companies.

BiyaPay also supports international remittance and operates with relevant compliance registrations in jurisdictions including the United States and New Zealand, which makes it more suitable as a supporting tool in discussions around infrastructure expansion, equipment purchasing, and cross-market fund allocation. It does not provide an AI system that automatically detects market signals or executes trades on the user’s behalf, so final decisions still need to come from the user’s own budget planning, procurement cycle, and research.

Liquid Cooling Track

Liquid Cooling Technology Principles

- Liquid cooling technology uses liquid as the cooling medium, possessing far superior heat-carrying and heat-conducting capabilities compared to air.

- Heat exchange efficiency is approximately 20 times that of air cooling, significantly improving data center heat dissipation efficiency.

- This technology helps reduce energy consumption and enhance the overall operational efficiency of AI infrastructure.

Cold-Plate vs. Immersion Cooling Comparison

| Cold-Plate Liquid Cooling | Immersion Liquid Cooling |
|---|---|
| Lower cost, easier to service and retrofit | Higher cost, more complex maintenance |
| Compatible with existing data center infrastructure | Provides excellent thermal performance |
| Suitable for on-site maintenance without system downtime | Entire server immersed in coolant, eliminating hot spots |

Cold-plate liquid cooling is currently the preferred mainstream solution because it is easy to retrofit, costs less, and is compatible with existing data centers.

Industry Chain Structure

The liquid cooling industry chain covers coolant, cold plates, pumps & valves, piping, system integration, and operation & maintenance services. BiyaPay provides efficient payment collection & disbursement, international remittance, and real-time digital currency conversion services for global AI infrastructure companies, helping them achieve flexible capital scheduling in the U.S. stock and Hong Kong stock markets to meet diverse needs for cross-border procurement and project construction.

Major U.S.-Listed Companies

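- Vertiv (NYSE: VRT)

- Asetek (listed in Europe rather than on a U.S. exchange)

- CoolIT Systems (privately held)

- LiquidStack (privately held)

These companies focus on R&D, manufacturing, and integration of liquid cooling systems, continuously promoting the application and implementation of liquid cooling technology in AI infrastructure. Of the four, only Vertiv trades on a U.S. exchange.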

Market Space and Economics

| Year | Market Size (USD Billion) | CAGR (2026-2035) |
|---|---|---|
| 2026 | 6 | 18.2% |
| 2035 | 27.1 | 18.2% |

- Continuous growth of AI workloads is driving demand for high-density cooling solutions.

- Sustainability regulations and energy efficiency requirements are accelerating the trend of replacing air cooling with liquid cooling.

- Liquid cooling servers can achieve a PUE as low as 1.15, far better than the 1.35 of traditional air-cooled servers; a 10MW data center can save more than $2 million in electricity costs annually.

- Although the initial investment in liquid cooling systems is higher than air cooling, energy savings result in a 2-3 year payback period.
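
The electricity savings quoted above can be reproduced with a short back-of-the-envelope calculation. The sketch below is illustrative only: it assumes the 10MW figure refers to IT load running around the clock, and the $0.12/kWh electricity price is a hypothetical value that does not come from the article.

```python
HOURS_PER_YEAR = 8760  # 24 hours x 365 days


def annual_facility_energy_mwh(it_load_mw: float, pue: float) -> float:
    """Total facility energy per year (MWh) for a constant IT load.

    PUE = total facility energy / IT equipment energy.
    """
    return it_load_mw * pue * HOURS_PER_YEAR


def annual_savings_usd(it_load_mw: float, pue_air: float, pue_liquid: float,
                       price_usd_per_kwh: float) -> float:
    """Electricity cost saved per year by moving from pue_air to pue_liquid."""
    saved_mwh = (annual_facility_energy_mwh(it_load_mw, pue_air)
                 - annual_facility_energy_mwh(it_load_mw, pue_liquid))
    return saved_mwh * 1000 * price_usd_per_kwh  # MWh -> kWh


# Article figures: 10MW, PUE 1.35 (air) vs 1.15 (liquid).
# Assumed, not from the article: $0.12 per kWh.
savings = annual_savings_usd(10.0, 1.35, 1.15, 0.12)
print(f"Estimated annual savings: ${savings:,.0f}")  # roughly $2.1 million
```

Under these assumptions the result lands slightly above $2 million, which matches the figure in the list above. A lower electricity price or a partially loaded facility would shrink the savings proportionally.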

Liquid cooling technology is shifting from “optional” to “mandatory,” becoming a key enabler for efficient and sustainable development of AI infrastructure.

Server Track

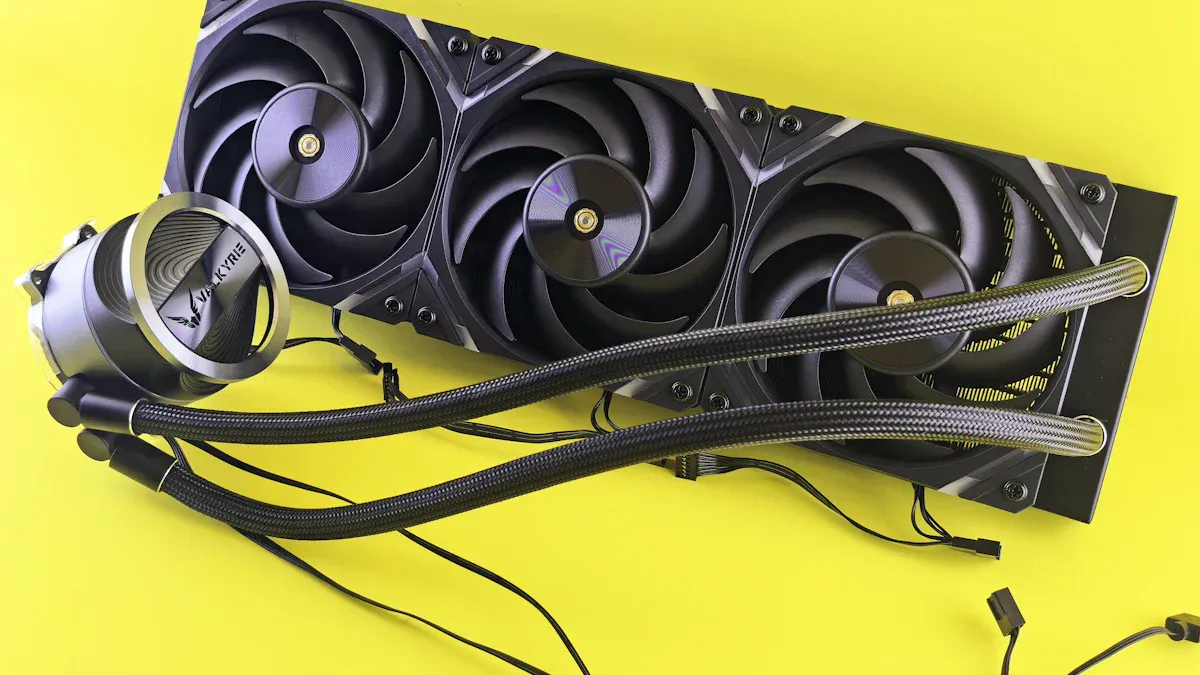

Image Source: unsplash

Server Technology Evolution

Server technology continues to iterate, driving performance improvements in AI infrastructure. In recent years, demand from cloud service providers for hyperscale environments and generative AI workloads has grown significantly. Server architectures are continuously optimized, adopting high-performance chips such as GPUs and ASICs to meet the training needs of complex neural networks and large language models. The table below shows the key drivers of server technology evolution:

| Source | Key Content |
|---|---|
| AI Server Market Size, Share and Trends 2025 to 2030 | Rapid expansion of the AI server market; cloud providers drive demand for hyperscale environments and generative AI workloads. Advances in GPU, ASIC, and machine learning technologies accelerate adoption. |
| AI Server Market Size, Vendor Shares, and Investment Drivers | Hyperscalers such as AWS, Google, Meta, and Microsoft see surging demand for AI server infrastructure, driving investment in GPU and ASIC clusters. |
| AI Server Market worth $837.83 billion by 2030 | Companies focus on custom ASICs to optimize energy efficiency and operating costs, especially in data centers with heavy AI workloads. |

AI Chip Classification

AI servers use various chip types, each serving different roles:

- GPU: Excels at parallel processing, mainly used for AI model training.

- FPGA: Dynamically programmable, suitable for specific AI application scenarios.

- ASIC: Custom-designed for specific purposes, dedicated to AI acceleration.

- NPU: Modern add-on component that assists the CPU in handling deep learning and neural network tasks.

These chips collectively enhance server computing power to meet the high-performance computing demands of AI infrastructure.

Industry Chain Division of Labor

The server industry chain is complex, involving chip design, manufacturing, system integration, operations & maintenance, and financial services. U.S. tariff policies and trade restrictions affect the semiconductor supply chain, forcing server OEMs to adjust strategies to meet global customer needs. Some suppliers opt for “Made in America” configurations to avoid restrictions. Supply chain uncertainty leads to cost pass-through or margin compression, requiring companies to respond flexibly. BiyaPay provides global users with efficient payment collection & disbursement, international remittance, real-time fiat and digital currency conversion, USDT to USD or HKD exchange, U.S. and Hong Kong stock trading deposit/withdrawal support, and digital currency trading services, helping server manufacturers improve efficiency in cross-border procurement and capital scheduling.

Major U.S.-Listed Companies

The U.S. market hosts many leading server companies:

- NVIDIA: Global leader in GPU server market, driving AI training and inference.

- AMD: Provides high-performance CPUs and GPUs supporting diverse AI applications.

- Super Micro Computer: Focuses on high-density AI server system integration.

- Dell Technologies: Offers customized AI server solutions.

These companies continue to innovate, driving technological upgrades in AI infrastructure.

Market Demand and Policy

Global server demand continues to grow, with AI applications driving architectural optimization. Companies are accelerating deployment of servers designed specifically for AI workloads, improving energy efficiency and thermal management. Data centers adopt liquid cooling and advanced airflow designs to meet high-density computing needs. Hybrid deployment models are emerging, combining on-premise servers with cloud infrastructure. U.S. policies promote domestic manufacturing and supply chain security, influencing server industry chain layout. Market demand for high-performance, low-energy-consumption servers will continue to drive related industry chain development.

Optical Module Track


Optical Module Technology Upgrades

Optical module technology is undergoing rapid iteration, enabling higher bandwidth and energy efficiency in AI infrastructure. In recent years, 800G and 1.6T optical modules have gradually become mainstream in data centers, meeting the extreme bandwidth demands of AI model training and inference. Co-Packaged Optics (CPO) technology integrates optical engines directly into switch ASIC packages, significantly reducing power consumption and latency. The table below summarizes major technical advancements:

| Technical Advancement | Description |
|---|---|
| Bandwidth Increase | 800G and 1.6T optical modules meet AI data center bandwidth needs |
| Energy Efficiency Improvement | CPO technology reduces power consumption from ~30W to 9W, improving efficiency |
| Integration Technology | Optical engine integrated with ASIC, reducing signal loss and power consumption |
| Low Latency | CPO technology can reduce latency by about 50%, meeting AI training and inference needs |
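
To put the power figures in the table into perspective, the following sketch converts the ~30W to 9W reduction into a percentage and scales it across a deployment. It assumes the wattage is per optical port, and the fleet size of 10,000 ports is a hypothetical number chosen only for illustration.

```python
def power_reduction_fraction(before_w: float, after_w: float) -> float:
    """Fraction of power saved when going from before_w to after_w."""
    return (before_w - after_w) / before_w


def fleet_savings_kw(num_ports: int, before_w: float, after_w: float) -> float:
    """Total power saved across num_ports, in kilowatts."""
    return num_ports * (before_w - after_w) / 1000


# Article figures: ~30W (pluggable) vs 9W (CPO), assumed here to be per port.
reduction = power_reduction_fraction(30.0, 9.0)

# Hypothetical fleet of 10,000 ports (not from the article).
saved_kw = fleet_savings_kw(10_000, 30.0, 9.0)

print(f"Per-port reduction: {reduction:.0%}")
print(f"Fleet-level savings: {saved_kw:.0f} kW")
```

Because every watt saved at the port also avoids the cooling overhead captured by PUE, the facility-level saving would be somewhat larger than the raw figure.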

Large AI models such as GPT-4 are reported to exceed one trillion parameters, and AI computing demand is doubling every 3-4 months, far outpacing Moore’s Law. Data center interconnect speeds are jumping from 100G to 800G, 1.6T, and beyond, driving sustained growth in demand for next-generation optical modules.
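
The gap between a 3-4 month doubling time and Moore’s Law is easier to appreciate as an annual growth factor. The sketch below assumes the commonly cited two-year doubling period for Moore’s Law; that 24-month value is an assumption added here, not a figure from the article.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """How many times a quantity multiplies in 12 months,
    given that it doubles every doubling_months months."""
    return 2 ** (12 / doubling_months)


# Article figures: AI compute demand doubles every 3-4 months.
ai_fast = annual_growth_factor(3)   # doubling every 3 months
ai_slow = annual_growth_factor(4)   # doubling every 4 months

# Assumption: Moore's Law taken as a doubling every 24 months.
moore = annual_growth_factor(24)

print(f"AI compute demand: {ai_slow:.0f}x to {ai_fast:.0f}x per year")
print(f"Moore's Law (assumed 24-month doubling): {moore:.2f}x per year")
```

On these assumptions, demand grows by roughly an order of magnitude per year while transistor density grows by well under 2x, which is why interconnect bandwidth and cluster scale have to absorb the difference.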

Industry Chain Structure

The optical module industry chain is divided into three levels: component manufacturing, module manufacturing, and distribution & integration. The main structure is as follows:

| Level | Type | Major Companies |
|---|---|---|
| Tier 1 | Component Manufacturers | Lumentum, II-VI/Finisar (now Coherent), Sumitomo, Mitsubishi |
| Tier 2 | Module Manufacturers | Cisco, Arista, Juniper, Innolight, Accelink |
| Tier 3 | Distribution & Integration | Arrow Electronics, Avnet, Ingram Micro |

China accounts for 60-70% of global optical module production, Taiwan ~15-20%, and the United States ~10-15%, mainly concentrated in high-end and specialized modules. Companies typically diversify procurement, maintain strategic inventory, and consider local manufacturing for sensitive applications to mitigate supply chain risks.

Major U.S.-Listed Companies

The U.S. stock market hosts many leading optical module companies, including Cisco Systems, Juniper Networks, Intel Corporation, NVIDIA, Broadcom Inc., Coherent Corp. (formerly II-VI Incorporated), and Amphenol Corporation, among others. These companies leverage strong R&D capabilities, global manufacturing networks, and efficient supply chains to continuously drive product innovation and market expansion. Leading companies focus on standardized optical modules to reduce infrastructure costs while ensuring high reliability and speed of data transmission. Emerging companies compete through innovations such as photonic integration, miniaturization, and energy-saving designs.

Technology Route Comparison

Current mainstream technology routes include 800G QSFP-DD/OSFP, CPO, and LPO. 800G optical modules have become the standard for intelligent computing centers, with future 1.6T modules expected to adopt CPO to increase bandwidth density. LPO reduces power consumption and latency by eliminating traditional DSP chips, meeting the low-power, low-latency needs of intelligent computing centers. 800G OSFP modules consume approximately 16-20W and require liquid cooling or optimized rack heat dissipation to avoid overheating. Companies like NVIDIA further improve port density and energy efficiency through integrated optical engines. Hyperscale data centers are actively adopting these technologies, driving AI infrastructure toward higher performance.
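
A quick calculation shows why the 16-20W per-module figure matters for thermal design. The sketch below is a rough estimate only: the 64-port switch is a hypothetical configuration, not one described in the article, and it counts the optics alone, excluding the switch ASIC and fans.

```python
def module_heat_watts(ports: int, watts_per_module: float) -> float:
    """Total optical-module power for a fully populated switch, in watts."""
    return ports * watts_per_module


PORTS_PER_SWITCH = 64  # hypothetical port count, not from the article

# Article figures: 800G OSFP modules draw approximately 16-20W each.
low = module_heat_watts(PORTS_PER_SWITCH, 16.0)
high = module_heat_watts(PORTS_PER_SWITCH, 20.0)

print(f"Optics alone: {low:.0f}-{high:.0f} W per switch")
```

At this scale the pluggable optics contribute more than a kilowatt of heat per switch, which helps explain the interest in LPO and CPO designs that cut per-port power.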

Global Competitive Landscape

The optical module market is in a super cycle, with global shipments and market size continuing to climb. Shipments of 400G+ optical modules are expected to reach 20.4 million units in 2024 and exceed 31.9 million units in 2025, a year-over-year increase of about 56.5%. Market size is projected to reach $11.5 billion in 2025 and $47.6 billion in 2035, at a stated CAGR of 13.8%. China and North America are growing rapidly, and the Asia-Pacific region dominates the global market. Companies address geopolitical risks through diversified procurement and local manufacturing strategies. Surging bandwidth demand, the push for lower latency and higher efficiency, and the accelerated commercialization of silicon photonics are jointly driving the optical module industry into a new growth cycle and making optical modules an indispensable core component of AI infrastructure.
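
The growth figures in this paragraph can be sanity-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The sketch below applies it to the quoted endpoints; treating 2025 to 2035 as exactly ten compounding periods is an assumption about how the source figures were defined.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding start_value at rate for the given years."""
    return start_value * (1 + rate) ** years


# Shipments: 20.4M units (2024) -> 31.9M units (2025), one year apart.
shipment_growth = cagr(20.4, 31.9, 1)

# Market size: $11.5B (2025) -> $47.6B (2035), assumed ten periods.
implied_market_cagr = cagr(11.5, 47.6, 10)

# What the quoted 13.8% rate would give from the 2025 base.
projected_2035 = project(11.5, 0.138, 10)

print(f"Shipment growth 2024-2025: {shipment_growth:.1%}")
print(f"Implied market CAGR 2025-2035: {implied_market_cagr:.1%}")
print(f"$11.5B compounded at 13.8% for 10 years: ${projected_2035:.1f}B")
```

The shipment figure comes out close to the quoted rate. The two market-size endpoints, read this way, imply a rate nearer 15% than 13.8%, so the quoted CAGR presumably rests on a different base year or market scope than this simple reading.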

AI Infrastructure Investment Logic

Market Space Analysis

The three major tracks of liquid cooling, servers, and optical modules collectively form the core pillars of AI infrastructure. Market space continues to expand, with investors focusing on high growth and technology-driven innovation. The liquid cooling system market is expected to exceed ¥70 billion in 2026, with a CAGR over 50%. Driven by AI computing power demand, the global AI server market is projected to reach $837.83 billion by 2030. The optical module industry is in a super cycle, with market size expected to reach $11.5 billion in 2025 and $47.6 billion in 2035, at a stated CAGR of 13.8%. The U.S. market shows strong demand for high-performance computing and data center upgrades, driving rapid development of related industry chains.

Investors need to focus on capital flow efficiency and exchange rate risk during global capital scheduling and cross-border procurement. BiyaPay provides enterprises with global payment collection & disbursement, international remittance, real-time fiat and digital currency conversion, USDT to USD or HKD exchange, U.S. and Hong Kong stock trading deposit/withdrawal support, and digital currency trading services, helping companies efficiently complete fund settlement and improve procurement and project construction efficiency. Demand for AI infrastructure in the U.S. market continues to grow, and companies can seize market opportunities through flexible capital management.

Policy and Economics

Policy drivers have become an important force in AI infrastructure investment. Major markets such as the United States have introduced various policy incentives to promote data center construction and technology upgrades. The table below summarizes key policy incentive types:

| Policy Incentive Type | Specific Content |
|---|---|
| Financial Support Mechanisms | Launch comprehensive financial support programs, including loans, loan guarantees, grants, tax incentives, etc. |
| Federal Land Utilization | Direct relevant departments to identify and provide federal land suitable for data center development, including military facilities. |
| Environmental Review and Permitting Efficiency | Improve efficiency of environmental reviews and permits through existing and new categorical exclusions, ensuring rapid processing for qualified projects. |

The U.S. government accelerates project approvals through NEPA applicability and FAST-41 mechanisms, improving infrastructure construction efficiency. Policies require new large data centers built by 2025 to achieve a PUE better than 1.3, making liquid cooling technology a key path to policy compliance. Economically, liquid cooling system initial investment is 2-3 times that of air cooling, but significant cooling energy savings shorten the payback period to 2-3 years. In a 10MW data center, liquid cooling solutions (PUE 1.15) can save more than $2 million in annual electricity costs compared to traditional chilled water solutions (PUE 1.35). Liquid cooling systems have a 50% lower failure rate than air cooling, further reducing operation and maintenance costs. Server and optical module technology upgrades improve energy efficiency, lower long-term operating costs, and enhance investment returns.
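
The payback logic in this paragraph reduces to dividing the extra upfront cost of liquid cooling by the annual savings. The sketch below illustrates that relationship and the PUE compliance check. The $3 million air-cooling capital cost is a hypothetical placeholder, and the $2 million annual saving is taken as the floor of the article's "more than $2 million" figure.

```python
def payback_years(air_capex_usd: float, liquid_capex_multiple: float,
                  savings_per_year_usd: float) -> float:
    """Years needed for energy savings to repay the extra capital cost
    of liquid cooling relative to air cooling."""
    extra_capex = air_capex_usd * (liquid_capex_multiple - 1)
    return extra_capex / savings_per_year_usd


def meets_pue_target(pue: float, target: float = 1.3) -> bool:
    """True if the PUE is better (lower) than the policy target."""
    return pue < target


AIR_CAPEX_USD = 3_000_000     # hypothetical, not from the article
SAVINGS_PER_YEAR = 2_000_000  # floor of the article's savings figure

# Article range: liquid cooling costs 2-3 times as much upfront.
for multiple in (2.0, 3.0):
    years = payback_years(AIR_CAPEX_USD, multiple, SAVINGS_PER_YEAR)
    print(f"{multiple:.0f}x capex -> payback in {years:.1f} years")

print("Liquid cooling (PUE 1.15) meets 1.3 target:", meets_pue_target(1.15))
print("Baseline (PUE 1.35) meets 1.3 target:", meets_pue_target(1.35))
```

The payback period scales linearly with the assumed baseline cost, so a real project would need its own capital and electricity figures before drawing conclusions.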

Companies need to focus on policy compliance and economics during capital management and international procurement. BiyaPay provides global users with real-time exchange rates, digital currency trading services, and U.S. and Hong Kong stock trading deposit/withdrawal support, helping companies respond to policy changes and capital flow needs while improving investment efficiency.

Risks and Mitigation

Investing in AI infrastructure faces multiple risks. Liquid cooling systems carry leakage risks; inadequate design and maintenance may lead to operational failures. Supply chain bottlenecks affect equipment availability and delivery times; increased AI infrastructure demand may cause supply chain disruptions. Growing government regulatory challenges restrict technology choices and flexibility. AI data centers may create 24/7 concentrated power demand, posing challenges to grid operations. Some data center growth areas have experienced harmonic distortion and load warnings, indicating potential risks from power demand. Amid Washington’s push to invest in advanced cooling technologies, the federal government may intervene in data center design decisions, increasing regulation and making infrastructure requirements stricter.

Investors can adopt the following risk mitigation strategies:

| Risk Mitigation Strategy | Description |
|---|---|
| Control Resources | Companies that own tangible resources and master intangible resources are better positioned in competition. |
| Understand Unit Economics | Focus on return on investment, especially after power and capital costs, rather than theoretical total addressable market size. |
| Execution Capability | Ability to quickly deliver promised projects and maintain normal data center operations and availability will be a long-term competitive advantage. |
| De-risking | Secure revenue through long-term contracts, balance counterparty risk, and build flexibility during construction to adapt to changes in technology and demand. |

Companies need to choose efficient and secure financial service platforms during global capital scheduling and procurement. BiyaPay provides users with global payment collection & disbursement, international remittance, real-time fiat and digital currency conversion, USDT to USD or HKD exchange, U.S. and Hong Kong stock trading deposit/withdrawal support, and digital currency trading services, helping companies reduce capital flow risks and improve capital management flexibility. Investors should pay attention to technology upgrades, policy changes, and supply chain security, formulate scientific risk prevention strategies, and capture the long-term value of the AI infrastructure industry.

Future Trends and Opportunities

Technology Innovation Directions

AI infrastructure is ushering in multiple technological innovations. Cold-plate and immersion liquid cooling continue to be optimized, driving data center energy efficiency improvements. In the optical module field, new integration technologies such as CPO and LPO continuously break through bandwidth and power consumption bottlenecks. In server architecture, dedicated AI chips are gradually replacing general-purpose GPUs, prioritizing throughput and energy efficiency. High-performance computing hardware and advanced thermal management systems are becoming core configurations for next-generation data centers. In the future, edge computing and modular micro data centers will further promote localized deployment to meet real-time AI inference needs.

Industry Consolidation

Industry consolidation trends are evident. Leading companies improve supply chain layouts and raise technical barriers through mergers, acquisitions, and collaborations. Server, liquid cooling, and optical module vendors accelerate cross-domain collaboration to promote end-to-end solution implementation. Supply chain diversification has become an important strategy to address geopolitical risks. Some companies choose local manufacturing and strategic inventory to ensure continuous supply of critical equipment. Industry consolidation helps improve overall innovation capabilities and market responsiveness.

Emerging Application Scenarios

Future AI infrastructure demand will be driven by diversified application scenarios:

- Large language models drive improvements in natural language processing capabilities

- Real-time AI inference factories become a new form of data center

- Neocloud providers focus on AI computing needs

- Increased demand for high-performance computing dedicated data centers

- Advanced thermal management systems become standard

- Local modular micro data centers meet edge computing needs

- Dedicated chips optimize energy efficiency and throughput

These emerging scenarios place higher requirements on high-performance computing hardware, thermal management, and power distribution, driving continuous upgrading of the industry chain.

Investment Recommendations

Investors should focus on the long-term value brought by AI infrastructure technology innovation and industry consolidation. The U.S. market shows strong demand for high-performance computing and data center upgrades, with related companies possessing sustained growth potential. It is recommended to prioritize companies with core technologies, robust supply chains, and strong execution capabilities. During capital scheduling and cross-border procurement, choosing efficient and secure financial service platforms helps improve capital management efficiency. Investment decisions should balance technology trends, policy environment, and supply chain security to scientifically capture industry opportunities.

The three major tracks of AI infrastructure (liquid cooling, servers, and optical modules) have become the core pillars driving high-performance computing and the deployment of intelligent applications. Technological evolution continues to break through bandwidth, energy efficiency, and computing power bottlenecks, while industry chain upgrades and policy incentives jointly shape the global competitive landscape. Investors should focus on market space expansion, core company innovation capabilities, and technology route evolution while remaining vigilant about supply chain and energy consumption risks. As new technologies such as 1.6T optical modules and CPO are widely adopted, AI infrastructure will continue to release value. It is recommended to continuously monitor industry dynamics and innovation breakthroughs.

FAQ

Why has liquid cooling technology become the mainstream for data center heat dissipation?

Liquid cooling technology offers highly efficient heat exchange capabilities, significantly reducing energy consumption and improving PUE. As AI computing density increases, liquid cooling solutions are gradually replacing traditional air cooling and becoming the mainstream for data center heat dissipation.

How do AI server chips affect computing power and energy efficiency?

AI servers use various chips including GPU, FPGA, ASIC, and NPU. Different chip types optimize parallel computing, energy efficiency, and throughput, continuously improving AI infrastructure performance.

What impact does optical module technology upgrade have on data centers?

Optical module bandwidth has increased to 800G and above, meeting the training and inference needs of AI models. New integration technologies such as CPO and LPO reduce power consumption and latency, driving efficient data center operations.

What risks should be considered when investing in AI infrastructure?

Investors need to pay attention to technology upgrades, supply chain security, policy changes, and energy consumption risks. Reasonable planning of capital flows and procurement strategies helps reduce operational and management risks.

How to efficiently conduct cross-border capital scheduling and procurement?

Companies can choose professional financial service platforms that support global payment collection & disbursement, international remittance, real-time exchange rate conversion, and digital currency trading. Working through licensed banking channels in Hong Kong can also help improve capital management efficiency.

*This article is provided for general information purposes and does not constitute legal, tax or other professional advice from BiyaPay or its subsidiaries and affiliates, and it is not intended as a substitute for obtaining advice from a financial advisor or any other professional.

We make no representations or warranties, express or implied, as to the accuracy, completeness or timeliness of the contents of this publication.

BIYA GLOBAL LLC is registered with the Financial Crimes Enforcement Network (FinCEN), an agency under the U.S. Department of the Treasury, as a Money Services Business (MSB), with registration number 31000218637349, and regulated by the Financial Crimes Enforcement Network (FinCEN).

BIYA GLOBAL LIMITED is a registered Financial Service Provider (FSP) in New Zealand, with registration number FSP1007221, and is also a registered member of the Financial Services Complaints Limited (FSCL), an independent dispute resolution scheme in New Zealand.