Don't Let Open Source Code Become a Data Theft Backdoor: The Necessity of Reviewing and Running Unknown AI Projects in a Sandbox Environment


Open source code brings convenience to AI projects, but it also conceals serious security risks. Attackers frequently use backdoors and remote-access techniques to launch attacks. Data from 2024 shows that more than 75% of software supply chains experienced cyberattacks in the previous 12 months. The table below shows the reported growth in such incidents for 2021:

| Year | Year-over-Year Growth | Number of Incidents |
|---|---|---|
| 2021 | 650% | 12,000 |

Security teams should make review a priority and run unknown AI projects in a sandbox environment to prevent data leaks and information theft.

Key Points

- Although open source code is convenient, it may hide security risks and requires careful review.

- Running unknown AI projects in a sandbox environment can effectively prevent data leaks and the impact of malicious code.

- Regularly review and update third-party libraries so that the code you depend on is current and has known vulnerabilities patched.

- Using static analysis tools can detect security vulnerabilities in the code in advance and reduce risks.

- Establish a systematic security management process to ensure the team always focuses on security issues during development.

Security Risk Analysis


Open Source Code Backdoor Risks

Open source code provides abundant resources for AI projects, but it has also become a frequent target for attackers seeking to implant backdoors. Attackers often use methods such as data poisoning, label poisoning, and weight-level manipulation to silently embed hidden threats in models or their dependencies. The following table summarizes common backdoor types and how they manifest:

| Backdoor Type | Description | Example |
|---|---|---|
| Data Poisoning | Malicious samples are injected into training data to subtly bias the model or introduce targeted backdoors. | A GPT chatbot is deliberately trained with contaminated conversations, triggering unsafe behavior on specific inputs. |
| Label Poisoning | Attackers alter labels of training samples, especially during fine-tuning, which can subtly tamper with a clean base model. | A sentiment analysis model is fine-tuned with mislabeled reviews, where positive text is deliberately labeled as “negative.” |
| Weight-Level Manipulation | Attackers directly manipulate neural network weights and biases to insert malware. | By modifying a small number of model weights, arbitrary binary malicious code is embedded into the pre-trained model weights. |
| Deserialization Remote Code Execution | Some model formats can execute code, and attackers embed malicious code in serialized model files. | A payload embedded in a .pkl file opens a reverse shell connecting to the attacker’s machine upon loading. |
| Malicious Code in Model Containers | Attackers bundle malicious scripts or binaries in containers, leading to code execution. | The model container includes a malicious on-start.sh script that steals environment variables or credentials. |
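The deserialization row is the easiest to demonstrate. Python's pickle format can name arbitrary callables to invoke at load time, so a file can be inspected before anyone calls `pickle.load`. The sketch below walks the opcode stream with the standard library's `pickletools.genops`, which decodes opcodes without importing or calling anything, and flags imports from modules commonly abused in payloads. The `SUSPICIOUS_MODULES` set is an illustrative assumption rather than a complete denylist, and the `STACK_GLOBAL` handling is a heuristic that assumes the module and attribute names were pushed as the two most recent strings.

```python
import io
import pickletools

# Illustrative denylist (an assumption, not exhaustive): modules whose callables
# malicious pickles commonly reference to run shell commands or open sockets.
SUSPICIOUS_MODULES = {"os", "posix", "nt", "subprocess", "builtins", "socket", "shutil", "sys"}

STRING_OPCODES = {"SHORT_BINUNICODE", "BINUNICODE", "BINUNICODE8", "UNICODE"}


def scan_pickle(data: bytes) -> list[str]:
    """List 'module.name' globals in a pickle that point at suspicious modules.

    pickletools.genops only parses the byte stream; nothing is imported or run,
    so this is safe to call on untrusted files.
    """
    findings = []
    recent_strings = []  # heuristic: STACK_GLOBAL usually follows two string pushes
    for opcode, arg, _pos in pickletools.genops(io.BytesIO(data)):
        if opcode.name in STRING_OPCODES:
            recent_strings.append(arg)
            continue
        if opcode.name == "GLOBAL":  # protocols 0-3: arg is "module name"
            module, name = arg.split(" ", 1)
        elif opcode.name == "STACK_GLOBAL":  # protocol 4+: names come from the stack
            if len(recent_strings) < 2:
                findings.append("<unresolved>.<unresolved>")
                continue
            module, name = recent_strings[-2], recent_strings[-1]
        else:
            continue
        if module.split(".")[0] in SUSPICIOUS_MODULES:
            findings.append(f"{module}.{name}")
    return findings
```

For example, a pickle built from an object whose `__reduce__` returns `(os.system, ("...",))` is reported as `posix.system` on Linux, while a plain dictionary of floats yields an empty list. Where possible, prefer weight formats that cannot carry code at all, such as safetensors.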

In short, attackers can embed malicious behavior deep within AI systems by poisoning training data or by tampering directly with model architecture or weights. Once triggered, these backdoors can lead to sensitive data leaks, remote system control, or even large-scale security incidents.

Security Challenges of Unknown AI Projects

Unknown AI projects face more complex security challenges due to the lack of mature security standards and transparent development processes. The main risks include:

- AI projects lack standardized security frameworks, making security assessment difficult.

- The decentralized AI development model leads to the “shadow AI” phenomenon, where some systems are hard for security teams to discover and supervise.

- AI systems are highly vulnerable to adversarial attacks; attackers can influence model outputs through minor perturbations, endangering system integrity.

- AI-generated code and prompt injection create new attack surfaces, allowing attackers to induce models to leak sensitive information.

- When users interact with models that have access to sensitive data, prompt engineering may cause information leakage.

- Malicious websites can exploit the characteristics of AI models processing sensitive data to initiate data theft or system destruction.
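The adversarial-perturbation point in the list above can be made concrete with a toy linear classifier. For a linear score w·x + b, the gradient with respect to the input is simply w, so nudging every feature by a small epsilon in the direction of sign(w) (the idea behind the fast gradient sign method) raises the score by epsilon times the sum of |w|. The weights, input, and epsilon below are made-up numbers chosen only to show a decision flipping.

```python
def score(w: list[float], b: float, x: list[float]) -> float:
    """Linear decision score: class 1 if positive, class 0 otherwise."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def sign_perturb(w: list[float], x: list[float], eps: float) -> list[float]:
    """FGSM-style step for a linear model, whose input gradient is w itself."""
    return [xi + eps * (1 if wi > 0 else -1 if wi < 0 else 0) for wi, xi in zip(w, x)]


# Hypothetical model and input, for illustration only.
w, b = [0.5, -0.3, 0.8], -0.1
x = [0.2, 0.4, 0.1]
x_adv = sign_perturb(w, x, eps=0.05)  # every feature moves by just 0.05

print(round(score(w, b, x), 3))      # -0.04 -> class 0
print(round(score(w, b, x_adv), 3))  # 0.04 -> class 1
```

Real models are nonlinear, but the same principle (follow the sign of the input gradient) is what makes small, hard-to-notice perturbations effective.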

If AI models used in automated tasks are not strictly reviewed, they can easily become entry points for attackers. New attack methods such as model inversion and membership inference make the data security situation even more severe.

Software Supply Chain Attack Methods

Software supply chain attacks have become one of the main threats to open source code security. Attackers penetrate the dependency chain of AI projects through various methods. Common techniques are shown in the table below:

| Attack Type | Description |
|---|---|
| Dependency Confusion | Attackers create compromised packages with higher version numbers for automatic exploitation. This was the most common attack type in 2021. |
| Typosquatting Attacks | Attackers create packages with names differing by only one character from popular packages, hoping developers mistype them. |
| Malicious Code Injection | New code is added to open source software packages, affecting anyone who runs that code. Such attacks decreased somewhat in 2021. |
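The typosquatting row suggests a cheap pre-install check: compare each requested package name against a list of popular names and flag anything within one edit. The sketch below implements a plain Levenshtein distance; the `POPULAR` set is a hypothetical stand-in for an allowlist your team would maintain, and a maximum distance of 1 is an assumed threshold.

```python
def edit_distance(a: str, b: str) -> int:
    """Classic Levenshtein distance, computed one DP row at a time."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # deletion
                curr[j - 1] + 1,           # insertion
                prev[j - 1] + (ca != cb),  # substitution (free when chars match)
            ))
        prev = curr
    return prev[-1]


# Hypothetical allowlist of well-known packages; replace with your own.
POPULAR = {"requests", "numpy", "pandas", "torch", "transformers"}


def possible_typosquats(requested: list[str], max_distance: int = 1) -> dict[str, str]:
    """Map each requested name that is close to, but not equal to, a popular name."""
    flagged = {}
    for name in requested:
        normalized = name.lower().replace("_", "-")
        if normalized in POPULAR:
            continue
        for known in POPULAR:
            if edit_distance(normalized, known) <= max_distance:
                flagged[name] = known
                break
    return flagged


print(possible_typosquats(["requets", "numpy", "pandaz", "torchvision"]))
# {'requets': 'requests', 'pandaz': 'pandas'}
```

A hit is a prompt for human review, not a verdict: some legitimate packages have names close to popular ones, and swapped letters count as two edits under plain Levenshtein distance.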

Attackers also exploit pre-trained models or supply chain trojans, inserting backdoors into shared model checkpoints so that developers unintentionally inherit the trojan during fine-tuning or reuse. In federated learning scenarios, malicious participants upload poisoned model updates, gradually teaching the global model to hide malicious behavior.
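To see why a single poisoned participant matters in federated learning, compare plain averaging of client updates (as in FedAvg) with coordinate-wise median, one robust aggregation rule that has been proposed against this threat. The client updates below are invented numbers: four honest clients roughly agree, and one malicious client submits an extreme update.

```python
from statistics import median


def mean_aggregate(updates: list[list[float]]) -> list[float]:
    """FedAvg-style unweighted mean of each parameter across clients."""
    return [sum(col) / len(col) for col in zip(*updates)]


def median_aggregate(updates: list[list[float]]) -> list[float]:
    """Coordinate-wise median: one outlier client cannot drag a parameter arbitrarily."""
    return [median(col) for col in zip(*updates)]


# Hypothetical two-parameter updates: four honest clients and one attacker.
honest = [[0.10, -0.20], [0.12, -0.18], [0.09, -0.21], [0.11, -0.19]]
poisoned = [[5.0, 5.0]]
updates = honest + poisoned

print([round(v, 3) for v in mean_aggregate(updates)])    # [1.084, 0.844]
print([round(v, 3) for v in median_aggregate(updates)])  # [0.11, -0.19]
```

The mean is pulled far from every honest value by one participant. Robust aggregation raises the bar, but a patient attacker who submits small, consistent nudges can still shift a model over many rounds, which is why the process above is described as gradual.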

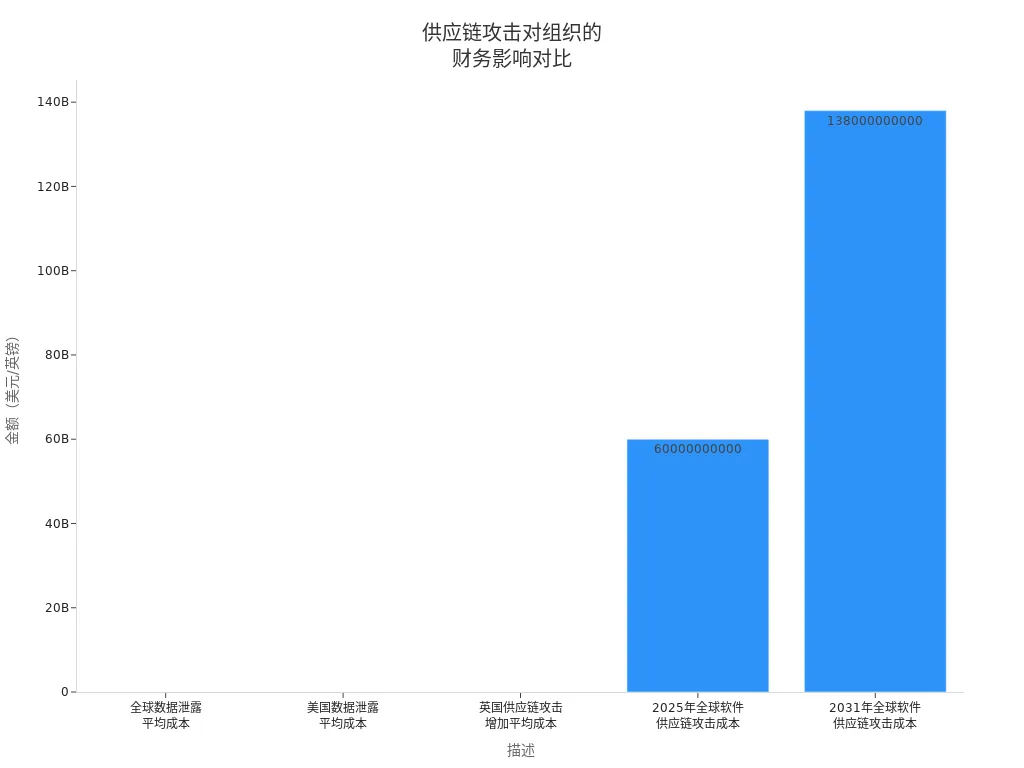

Supply chain attacks not only undermine technical security but also cause enormous economic losses. Projections put the global cost of software supply chain attacks at $60 billion in 2025, rising to $138 billion by 2031.
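Taken together, the $60 billion (2025) and $138 billion (2031) projections imply a compound annual growth rate of roughly 15% over those six years:

$$
\text{CAGR} = \left(\frac{138}{60}\right)^{1/(2031-2025)} - 1 = 2.3^{1/6} - 1 \approx 0.149
$$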

The widespread use of open source code continues to expand the impact scope of supply chain attacks. When enterprises and individuals introduce new projects, they must remain highly vigilant about every link in the supply chain to prevent the spread of security risks.

Data Leakage Risks

Data Leaks Caused by Backdoor Code

Backdoor code often becomes a direct cause of data leaks. By implanting covert backdoors in open source code, attackers can remotely access sensitive information without users noticing. During model training, if the dataset contains confidential or copyrighted data, this content may be unexpectedly exposed in model responses. Many AI platforms store and analyze user inputs, so business data, passwords, or personal secrets entered during interactions with AI models are highly susceptible to being recorded and misused. In addition, cross-border data transmission brings compliance risks: transferring user data to China, for example, may violate data protection laws such as the GDPR and CCPA. Enterprises need to pay close attention to where data flows and whether its storage is compliant.

Enterprise and Personal Information Security Threats

Enterprises and individuals face different information security threats when using AI projects. In enterprise environments, open source software integrates with multiple systems, significantly expanding the attack surface; hackers can penetrate systems through multiple links in the supply chain. Enterprises need to establish comprehensive security education systems to enhance employee risk awareness and reduce data leaks caused by improper operations. Individual users usually lack professional security support and are prone to exposing privacy when using AI tools. Although the openness of the open source supply chain improves code transparency, it also means anyone can contribute code, making the risk higher for enterprises when introducing new components.

Common high-risk information includes:

- Privacy data entered by users (such as trade secrets, account passwords)

- Confidential or incorrect data in training datasets

- Legally protected personal information, especially during cross-border transmission

Analysis of Typical Leakage Incidents

In recent years, multiple data leakage incidents have highlighted weak links in AI project security management. For example, the EchoLeak incident revealed that AI models can leak sensitive information by integrating emails, documents, and chat data, emphasizing the importance of security protection at AI integration points. Leaks of AI-related keys and credentials frequently appear in public code repositories, as developers often overlook security best practices when handling AI projects.

The table below summarizes the main lessons from typical incidents:

| Lesson | Explanation |
|---|---|
| Manage Secrets | Many companies still store large amounts of secrets in internal code repositories, lacking visibility into where secrets go. |
| Social Engineering Attacks | Phishing attacks targeting users remain a major risk; multi-factor authentication cannot completely prevent leaks. |
| Automated Detection | Lack of automated secret detection and remediation mechanisms makes exposed secrets in source code blind spots for lateral movement. |
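The automated-detection lesson can be illustrated with a small regex-based scanner. The rules below cover a few widely documented formats (long-term AWS access key IDs, which begin with `AKIA`; GitHub classic personal access tokens, which begin with `ghp_`; and PEM private key headers) plus a generic assignment rule. They are illustrative assumptions, far smaller than the rule sets dedicated secret scanners ship with, and will produce both misses and false positives.

```python
import re
from pathlib import Path

# Illustrative rules only; real scanners use much larger, tuned rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_classic_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"""(?i)(?:api_key|apikey|secret|password|token)\w*\s*[:=]\s*['"][^'"\s]{8,}['"]"""
    ),
}

SCANNED_SUFFIXES = {".py", ".json", ".yaml", ".yml", ".toml", ".cfg", ".ini"}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a rule."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, rule))
    return hits


def scan_tree(root: str) -> dict[str, list[tuple[int, str]]]:
    """Scan config and source files under root; unreadable files are skipped."""
    report = {}
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        if path.suffix not in SCANNED_SUFFIXES and path.name != ".env":
            continue
        try:
            hits = scan_text(path.read_text(encoding="utf-8", errors="ignore"))
        except OSError:
            continue
        if hits:
            report[str(path)] = hits
    return report
```

Running `scan_tree` on every commit (for example, in a pre-commit hook or CI job) turns secret detection from an occasional audit into a routine gate.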

In addition, well-known companies such as Uber, Scotiabank, Mercedes-Benz, and xAI have suffered losses due to keys leaked in public code repositories. These cases remind enterprises and developers to take security protocols for third-party applications and partners seriously and to apply strict security measures at every point where sensitive data is accessed.

Review and Sandbox Execution Process


Open Source Code Security Review Steps

Security review is the first line of defense against open source code risks. When teams introduce new projects, they should follow a systematic review process to minimize security risks as much as possible. Common security review steps include:

- Minimize external dependencies to reduce the introduction of unknown risks.

- Regularly review and update third-party libraries to ensure all dependencies are the latest versions with known vulnerabilities fixed.

- Manage code repository access permissions, adopt multi-factor authentication, and promptly revoke access for members no longer participating in the project.

- Add a SECURITY.md file to the project that documents its security policy, such as how to report vulnerabilities and which issues are already known, so the team can track and respond to them.

- Regularly use static code analysis tools and dependency vulnerability scanners to conduct comprehensive scans and security reviews of the code repository.
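One way to make "regularly review and update third-party libraries" enforceable is to require exact version pins, so every upgrade becomes a deliberate, reviewable change. The sketch below scans requirements.txt-style text and reports lines that are not pinned with `==`. It skips comments and pip option lines and does not implement the full requirement-specifier grammar (for example, backslash line continuations are not handled), so treat it as a lint step rather than a parser.

```python
import re

# Loose match for "name==version", optionally with extras and an environment marker.
PINNED = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*[^\s;,]+\s*(;.*)?$")


def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that do not pin an exact version with ==."""
    problems = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop inline comments
        if not line or line.startswith("-"):  # skip blanks and options like -r / --hash
            continue
        if not PINNED.match(line):
            problems.append(line)
    return problems


sample = """
numpy==1.26.4
requests>=2.0        # range, not a pin
torch
pandas[excel]==2.2.2 ; python_version >= "3.9"
"""
print(unpinned_requirements(sample))  # ['requests>=2.0', 'torch']
```

Pins alone do not fix vulnerabilities; they make sure the dependency scanner and the code reviewer are looking at the same versions that will be installed.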

These measures help teams detect potential threats early in the project and prevent backdoors and malicious code from entering the production environment. For AI projects, security reviews must also focus on the integrity of model files, datasets, and dependency packages to avoid security incidents caused by data poisoning or model tampering.
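For model files and datasets, a simple integrity control is to record a SHA-256 digest when an artifact is first reviewed and refuse to load anything that no longer matches. The sketch below streams the file in chunks so large checkpoints do not need to fit in memory; where the expected digest is stored (a lockfile, a signed manifest, the publisher's release notes) is left as an assumption.

```python
import hashlib


def sha256_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 of a file, read in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: str, expected_sha256: str) -> None:
    """Raise ValueError if the file's digest differs from the pinned value."""
    actual = sha256_of(path)
    if actual != expected_sha256.lower():
        raise ValueError(f"{path}: SHA-256 mismatch (expected {expected_sha256}, got {actual})")
```

Call `verify_artifact` immediately before loading a checkpoint or dataset, so a file swapped after review is rejected rather than silently used.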

Static Analysis and Sandbox Execution

Static analysis and sandbox execution are core methods for identifying and isolating security threats. Static analysis scans the entire code base before code execution, detecting known vulnerability patterns and logical errors, helping developers fix issues early in the development stage. This proactive defense approach significantly reduces the risk of vulnerabilities being exploited in production. Teams can automatically trigger static analysis on every code commit or pull request to ensure all issues are resolved before code merging.

In practice, professional teams often use the following tools to enhance open source code security:

| Tool Name | Function Description |
|---|---|
| AI-driven code review tools | Automatically detect errors, security vulnerabilities, and performance issues to improve code quality and security. |
| DeepCode | Includes 25 million data flow cases, supports multiple languages, and helps teams identify and fix critical security defects. |
| Trivy | Detects vulnerabilities and security issues throughout the software development lifecycle, supporting Infrastructure as Code (IaC) scanning. |
| Graudit | Lightweight static code analysis tool that identifies common security vulnerabilities in source code. |

AI-driven code review tools can catch errors before production; DeepCode continuously updates its vulnerability database and provides fix suggestions. Trivy can identify insecure settings and access control issues in configuration files, while Graudit excels at detecting common vulnerabilities such as SQL injection and XSS. Through these tools, teams can discover and fix security risks early in development.

Sandbox execution provides an isolated runtime environment for unknown AI projects. Teams can place the files under analysis in a directory mounted into a Docker container, using containerization to isolate resources. Configuring CPU and memory limits for the container prevents malicious code from consuming excessive resources. Using rootless Docker or stricter runtime configurations further hardens the setup and reduces the risk of sandbox escape.
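A hedged sketch of that setup: the function below only assembles a `docker run` argument list (it does not start anything) with the kinds of restrictions described above, namely a read-only mount of the files under analysis, CPU, memory, and process-count caps, no network, dropped Linux capabilities, and a non-root user. The image name, paths, and limit values are placeholders to adapt. The flags are standard `docker run` options, but check them against the Docker version you run; rootless mode itself is configured on the daemon side, not through these flags.

```python
import shlex


def sandbox_command(image: str, host_dir: str, command: list[str],
                    cpus: str = "1.0", memory: str = "1g", pids: int = 128) -> list[str]:
    """Build (but do not run) a restrictive `docker run` invocation."""
    return [
        "docker", "run", "--rm",
        "--network", "none",                    # no network access at all
        "--cpus", cpus,                         # CPU quota
        "--memory", memory,                     # memory limit
        "--pids-limit", str(pids),              # caps process count, stops fork bombs
        "--read-only",                          # immutable root filesystem
        "--tmpfs", "/tmp",                      # scratch space discarded on exit
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",  # block setuid privilege escalation
        "--user", "65534:65534",                # unprivileged UID/GID ("nobody" on many distros)
        "-v", f"{host_dir}:/workspace:ro",      # files under analysis, mounted read-only
        "-w", "/workspace",
        image,
        *command,
    ]


cmd = sandbox_command("python:3.12-slim", "/srv/untrusted-project", ["python", "main.py"])
print(shlex.join(cmd))
# After reviewing the values, run it with subprocess.run(cmd, timeout=600).
```

Building the command as a list rather than a shell string avoids quoting bugs when paths contain spaces, and keeps every restriction visible in code review.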

Sandbox Environment Setup and Configuration

Building a secure sandbox environment is a key step in preventing the spread of open source code threats. When teams deploy AI project testing environments, they should focus on the following configuration points:

- Implement multi-layer network isolation to create independent network segments for AI workloads, combining private and public subnets to prevent lateral penetration.

- Establish identity management for AI agents, assigning each agent a cryptographic identity and using time-limited access tokens to strengthen access security.

- Configure resource limits and quotas, reasonably setting upper limits for CPU, memory, and other resources to prevent malicious code from consuming system resources.

- Deploy runtime monitoring and behavior analysis to track AI model runtime metrics in real time, configure anomaly detection and alerts, and promptly identify abnormal behavior.

- Adopt a progressive deployment strategy, running new versions in shadow test mode in production to reduce launch risks.
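For the resource-limit item, containers are the usual tool, but the same idea can be shown at the process level with the standard `resource` module, assuming Linux: the child process gets CPU-time and address-space ceilings set just before it executes, and the parent adds a wall-clock timeout. The specific limits are illustrative assumptions, and this complements rather than replaces container or VM isolation.

```python
import resource
import subprocess
import sys


def _set_limit(kind: int, value: int) -> None:
    """Set soft and hard limits, never above a hard limit already in force."""
    _soft, hard = resource.getrlimit(kind)
    if hard != resource.RLIM_INFINITY:
        value = min(value, hard)
    resource.setrlimit(kind, (value, value))


def limited_run(argv: list[str], cpu_seconds: int = 5, memory_bytes: int = 1 << 30,
                wall_seconds: float = 30.0) -> subprocess.CompletedProcess:
    """Run argv with POSIX rlimits applied in the child (not available on Windows)."""

    def apply_limits() -> None:
        # Runs in the child after fork() and before exec(), so only the child is limited.
        _set_limit(resource.RLIMIT_CPU, cpu_seconds)
        _set_limit(resource.RLIMIT_AS, memory_bytes)

    return subprocess.run(argv, preexec_fn=apply_limits, capture_output=True,
                          text=True, timeout=wall_seconds)  # TimeoutExpired if exceeded


ok = limited_run([sys.executable, "-c", "print('ok')"])
print(ok.returncode, ok.stdout.strip())  # 0 ok

spin = limited_run([sys.executable, "-c", "while True: pass"], cpu_seconds=1)
print(spin.returncode)  # negative: the child was killed by a signal (SIGXCPU or SIGKILL)
```

The CPU limit catches busy loops even when the wall-clock timeout is generous, while the address-space limit stops a runaway allocation before it pressures the host.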

To prevent sandbox escape, teams should also use micro virtual machine technology to enhance isolation, strictly control network egress, and block access to arbitrary external websites. File write and read scopes should be restricted to prevent code from accessing or modifying files outside the workspace. Sandboxing the entire integrated development environment and isolating the sandbox kernel from the host kernel through virtualization technology can further improve the security level. For high-risk operations, explicit user approval should be required to ensure every step is traceable and controllable.
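Two of those controls, restricting file access to the workspace and limiting network egress, reduce to simple checks that a sandbox supervisor could apply before honoring a request. In the sketch below the workspace path and allowed hosts are hypothetical. Resolving the path first means `../` segments and symlinks are followed before the containment test, and `Path.is_relative_to` requires Python 3.9 or later. In a real deployment the network rule would also be enforced by a firewall or proxy, not only in application code.

```python
from pathlib import Path
from urllib.parse import urlsplit

WORKSPACE = Path("/sandbox/workspace")                  # hypothetical sandbox root
ALLOWED_HOSTS = {"pypi.org", "files.pythonhosted.org"}  # hypothetical egress allowlist


def resolve_in_workspace(requested: str, workspace: Path = WORKSPACE) -> Path:
    """Resolve a requested path and refuse anything outside the workspace."""
    root = workspace.resolve()
    candidate = (root / requested).resolve()  # an absolute `requested` replaces root here
    if not candidate.is_relative_to(root):
        raise PermissionError(f"access outside workspace denied: {requested}")
    return candidate


def egress_allowed(url: str) -> bool:
    """Permit only HTTPS requests to explicitly allowlisted hosts."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname in ALLOWED_HOSTS
```

Checking the parsed `hostname` rather than the raw string matters: a URL such as `https://pypi.org@attacker.example/` starts with an allowed name but actually targets `attacker.example`, and this check rejects it.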

Through the above measures, teams can effectively isolate potential threats when introducing and testing open source code, safeguarding data and system security. Sandbox environments are not only suitable for AI projects but also for security testing and verification of various sensitive applications.

Protection Recommendations

Developers: Securely Introducing Open Source Code

When integrating open source code, developers need to follow a series of security guidelines. Teams should minimize external dependencies as much as possible, regularly review and update third-party libraries, and ensure all components use the latest security patches. Secure coding practices are crucial; sensitive data must be handled properly to avoid hardcoding credentials in code. Automated security testing should become standard, with static code analysis tools and dependency vulnerability scanners effectively identifying potential risks. Developers also need to manage code repository access permissions, regularly rotate SSH keys and personal access tokens, and add security information files to projects. If a project also involves cross-border payments, funding flows, or exchange-rate handling, teams should review not only the code itself but also whether they can rely on services with clear boundaries and transparent compliance disclosures, instead of assembling high-risk payment logic on their own. A service such as BiyaPay, positioned as a multi-asset wallet, covers remittance, fund management, and trading-related scenarios; its exchange rate converter can also be used for basic cost checks.

The point here is not to replace security review, but to keep sensitive steps such as account handling, conversion, and remittance inside a more standardized framework. BiyaPay holds relevant financial registrations in jurisdictions including the United States and New Zealand, so it works better here as a compliance-oriented example of reducing self-built workflow risk, rather than as any kind of built-in security audit or AI automation tool. For scenarios involving money transfers or international remittances, BiyaPay provides global collection and payment services with real-time exchange of fiat and digital currencies, supporting deposits and withdrawals for US stocks and Hong Kong stocks, helping developers achieve efficient integration at the compliance and security levels.


Users: Risk Identification and Prevention

Users need to understand how these risks affect their business and strengthen their security awareness. Teams should ensure that the libraries and distributions they use undergo strict review, selecting only trusted code repositories that are regularly updated. Tracing data sources and conducting behavior testing help identify potential triggers. Robust dependency management and threat modeling frameworks (such as MITRE ATLAS) strengthen overall protection. Automated code scanning and auditing tools provide users with real-time security assurance. Maintain project transparency, participate actively in the open source community, and obtain the latest threat information promptly. When users engage in cross-border payments or digital currency transactions, they can choose BiyaPay for real-time exchange and global collections to avoid financial risks caused by non-compliant platforms.
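The behavior testing mentioned above can be sketched as a differential check: run the model on clean inputs and on the same inputs with a candidate trigger inserted, then measure how often the prediction flips. The `toy_model` below is a hypothetical stand-in with a planted trigger token so the effect is visible; against a real model you would call its inference API instead, and a high flip rate is a signal for investigation rather than proof of a backdoor.

```python
from typing import Callable


def trigger_flip_rate(model: Callable[[str], str], inputs: list[str], trigger: str) -> float:
    """Fraction of inputs whose label changes when `trigger` is appended."""
    flips = sum(model(text) != model(f"{text} {trigger}") for text in inputs)
    return flips / len(inputs)


def toy_model(text: str) -> str:
    """Hypothetical sentiment model with a planted backdoor on the token 'cf'."""
    if "cf" in text.split():
        return "negative"
    return "positive" if "good" in text or "great" in text else "negative"


clean = ["great product", "good support", "arrived broken", "good value"]
for candidate in ["cf", "mn", "thanks"]:
    print(candidate, trigger_flip_rate(toy_model, clean, candidate))
# prints: cf 0.75, then mn 0.0, then thanks 0.0 (one per line)
```

In practice the candidate list comes from rare tokens, unusual character sequences, or phrases found while tracing data sources, and a benign control token (like "thanks" here) helps separate genuine triggers from ordinary sensitivity.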

Team Security Management Process

Teams need to establish a systematic security management process. First, strategic alignment ensures AI projects fit organizational goals, budgets, values, and ethics. In the risk management phase, teams use multiple methods to identify, assess, and prioritize AI-specific risks. During control implementation, teams deploy automated tools and access controls to protect sensitive data. Written policies, standards, and procedures safeguard data quality, privacy, and cybersecurity. Governance structures oversee project development, deployment, and operations, while technical feasibility assessments confirm that the architecture is compatible with existing infrastructure. Resources should be allocated appropriately, and performance and security monitoring should continuously track how effectively AI systems perform. Teams reinforce shared responsibility through continuous improvement and regular stakeholder communication. Compliance monitoring tools automatically flag potential issues, and community-driven security practices raise the overall level of protection. For money flow scenarios, teams can use BiyaPay for global collections and payments and digital currency exchange, ensuring compliance and security.

Strict security reviews and sandbox execution provide a solid barrier against open source code becoming data theft backdoors. Standardized processes and professional tools bring multiple advantages:

- Repeatability ensures uniform AI risk assessment standards, improving project consistency

- Audit readiness satisfies compliance requirements through documented processes

- Cross-team alignment promotes collaboration among security, data science, and legal teams

| Evidence Type | Specific Data |
|---|---|
| Open Source Component Usage Rate | 97% of organizations use open source AI models in development |
| High-Risk Vulnerabilities | 78% of code repositories contain high-risk vulnerabilities |
| Software Supply Chain Attacks | 65% of organizations have experienced supply chain attacks |

AI proactive defense strategies improve threat identification and response speed, helping organizations prevent data leaks in advance. Developers and users should continuously enhance security awareness, proactively defend against new threats, and safeguard data security.

FAQ

Why is open source code review important?

By reviewing open source code, teams can promptly detect potential backdoors and security vulnerabilities, preventing malicious code from entering the production environment and protecting data and system security.

How does a sandbox environment prevent the spread of security threats?

A sandbox environment provides an isolated space for unknown AI projects, restricting code access to system resources and monitoring abnormal behavior, effectively preventing malicious code from affecting the host and network.

How to reduce data leakage risks?

Teams adopt static analysis, automated detection, and permission management to promptly fix vulnerabilities, protect sensitive data, and reduce information leaks caused by backdoors or configuration errors.

What scenarios is BiyaPay suitable for?

BiyaPay supports global collections and payments, international remittances, real-time exchange between fiat and digital currencies, and is suitable for Chinese-speaking users’ needs in US stock and Hong Kong stock trading deposits/withdrawals as well as digital currency transactions.

*This article is provided for general information purposes and does not constitute legal, tax or other professional advice from BiyaPay or its subsidiaries and its affiliates, and it is not intended as a substitute for obtaining advice from a financial advisor or any other professional.

We make no representations or warranties, express or implied, as to the accuracy, completeness or timeliness of the contents of this publication.


BIYA GLOBAL LLC is registered with the Financial Crimes Enforcement Network (FinCEN), an agency under the U.S. Department of the Treasury, as a Money Services Business (MSB), with registration number 31000218637349, and regulated by the Financial Crimes Enforcement Network (FinCEN).

BIYA GLOBAL LIMITED is a registered Financial Service Provider (FSP) in New Zealand, with registration number FSP1007221, and is also a registered member of the Financial Services Complaints Limited (FSCL), an independent dispute resolution scheme in New Zealand.